Best Color Space for YouTube, Instagram, and TikTok Exports

This guide explains how to choose a color space and export settings that survive real-world playback on YouTube, Instagram, and TikTok. It is intentionally practical and analytical, not a list of magic presets, because the best choice depends on what you shot, how you graded, and what devices your audience uses.

The short answer (so you can ship)

For most creators, the best default export target is:

- SDR (standard dynamic range)

- Rec.709 primaries (gamut)

- A Rec.709 transfer function (gamma) that aligns with typical web and phone viewing

- Consistent levels, and a deliberate choice between full range and legal range

You can absolutely deliver HDR in 2026, but it is a separate finishing path with more ways to fail. If you cannot test, or you need maximum consistency across platforms, SDR Rec.709 remains the safest baseline.

Key concepts (quick definitions)

What a color space actually means

When people say “color space,” they often mean three different things at once:

- Primaries (gamut): the triangle of colors you are allowed to represent

- Transfer function (gamma): how code values map to brightness

- Levels (range): what numeric values represent black and white

If any one of these is mismatched between your edit, export, upload, and playback, you get the classic social video problems: “washed out,” “too dark,” or “colors look off.”
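
To make the three components concrete, here is a minimal Python sketch that treats a "color space" as three separate fields and reports which ones differ between two stages of a pipeline. The `ColorSpec` type, the `mismatches` helper, and the string values are illustrative assumptions, not part of any NLE or platform API.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class ColorSpec:
    """The three things people bundle under 'color space'."""
    primaries: str  # gamut, e.g. "bt709"
    transfer: str   # gamma curve, e.g. "bt709"
    levels: str     # "full" or "legal"


def mismatches(expected: ColorSpec, actual: ColorSpec) -> list[str]:
    """Return the names of the components that differ between two stages."""
    return [
        f.name
        for f in fields(ColorSpec)
        if getattr(expected, f.name) != getattr(actual, f.name)
    ]


# Hypothetical example: the timeline and the export agree on gamut and gamma,
# but not on levels.
timeline = ColorSpec(primaries="bt709", transfer="bt709", levels="legal")
export = ColorSpec(primaries="bt709", transfer="bt709", levels="full")

print(mismatches(timeline, export))  # ['levels']
```

The point of keeping the fields separate is that a file can be "Rec.709" in two of the three senses and still look wrong because of the third.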

SDR vs HDR, and what platforms actually do

- SDR is the default assumption for most social feeds, and the most reliable when you care about consistency.

- HDR can look great on HDR capable devices, but it introduces tone mapping. Tone mapping is a creative reinterpretation of your highlights and midtones, and different devices apply it differently.

- Even if a platform supports HDR, the user may be watching in SDR mode, on an SDR display, or in an app that forces a conversion.

The takeaway: HDR is not just “more quality.” It is a different delivery format with different rules.

The role of color management in your NLE

Color management is the system that translates between:

- input color space (what the camera recorded)

- timeline color space (what you grade inside)

- output color space (what you deliver)

Modern NLEs can handle this automatically, but only if you set the project correctly. A viewer that looks correct does not guarantee the file is tagged correctly, and tagging matters because many platforms and devices rely on metadata to interpret gamma and primaries.
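
One way to check what an export actually wrote is to inspect the file's stream metadata. The sketch below assumes `ffprobe` (part of FFmpeg) is installed and on your PATH; the helper names are hypothetical. It reads the first video stream and lists color fields that are missing or reported as unknown.

```python
import json
import subprocess

# ffprobe reports these per-stream fields when the file carries color tags.
COLOR_FIELDS = ("color_primaries", "color_transfer", "color_space", "color_range")


def probe_video_stream(path: str) -> dict:
    """Run ffprobe on a file and return the first video stream as a dict."""
    result = subprocess.run(
        ["ffprobe", "-v", "error", "-select_streams", "v:0",
         "-show_streams", "-of", "json", path],
        capture_output=True, text=True, check=True,
    )
    return json.loads(result.stdout)["streams"][0]


def missing_color_tags(stream: dict) -> list[str]:
    """List color fields that are absent or reported as 'unknown'."""
    return [
        name for name in COLOR_FIELDS
        if stream.get(name) in (None, "", "unknown")
    ]


# Example with a hand-written stream dict, so no ffprobe call is needed here.
sample = {"codec_name": "h264", "color_primaries": "bt709", "color_range": "tv"}
print(missing_color_tags(sample))  # ['color_transfer', 'color_space']
```

For a real file you would call `missing_color_tags(probe_video_stream("export.mp4"))`, where the filename is a placeholder. An empty list only means the tags are present, not that they match how the pixels were actually encoded.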

Platform realities (what the apps and devices do)

YouTube

YouTube is the most forgiving platform for color accuracy, mainly because:

- it supports a wide range of upload formats and bitrates

- it is often viewed in a browser or YouTube app with relatively consistent playback

- HDR workflows are most mature here compared to other social apps

Still, YouTube transcodes everything. Your job is to feed it a master that is clean, correctly tagged, and robust to compression.

Instagram

Instagram is less predictable because:

- Reels, Stories, and feed video can be processed differently

- compression is aggressive

- phone display features (True Tone, Night Shift, adaptive brightness) are common

Common Instagram complaints:

- shadows look crushed (blacks lose detail)

- mids look darker than expected

- reds and skin tones shift or feel overcooked after compression

TikTok

TikTok is the most “compression forward” environment of the three:

- heavy compression can break gradients and subtle color transitions

- dark scenes suffer easily, and so do very saturated colors

- the audience is overwhelmingly on phones, which means you are competing with variable viewing conditions and display settings

If your video has soft gradients, fog, vignettes, or smooth skies, TikTok is where you will notice banding first.

Recommended export targets (decision based)

Default safest choice for most creators

If you want the highest probability of “looks the same everywhere,” use an SDR Rec.709 delivery.

- Primaries: Rec.709

- Transfer function: Rec.709 (practically, treat it as “SDR web/phone” gamma)

- Bit depth: 10-bit if your pipeline supports it, otherwise a very clean 8-bit with enough bitrate

- Range: be intentional (more on this below)

This is not conservative because it is old. It is conservative because it matches the lowest common denominator across phones, apps, and transcodes.

When to consider HDR exports

HDR exports make sense if:

- your footage is HDR (or wide dynamic range) and you want to preserve highlight detail

- your audience is likely to watch on HDR devices (newer phones and TVs)

- you can test on at least one HDR phone and one SDR device

- you can accept that some viewers will see an SDR tone mapped version

If you cannot test, HDR is a “surprise generator.”

A simple decision tree

- Is the project intended to be viewed as HDR?

- Is the majority of the audience on HDR capable displays?

- Can you test on at least one HDR phone and one SDR device?

- If any answer is no, deliver SDR Rec.709
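
The decision tree above can be sketched as a small Python function. The function and parameter names are illustrative; the logic simply mirrors the three questions and falls back to SDR Rec.709 when any answer is no.

```python
def choose_delivery(intended_hdr: bool, audience_mostly_hdr: bool, can_test_both: bool) -> str:
    """Return the delivery target suggested by the three yes/no questions."""
    if intended_hdr and audience_mostly_hdr and can_test_both:
        return "HDR"
    # Any single "no" sends the project down the SDR path.
    return "SDR Rec.709"


print(choose_delivery(intended_hdr=True, audience_mostly_hdr=True, can_test_both=False))
# SDR Rec.709
```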

What “best color space” means for social

For social delivery, “best” depends on three things working together:

- your mastering environment (how you grade)

- the platform’s interpretation (how it tags and transcodes)

- the viewer’s device (how it tone maps and displays)

In practice, “best” usually means “least likely to shift.”

A practical mapping of common delivery options

Levels: full vs legal (why this matters more than you think)

- Full range (0 to 255 in 8-bit terms): common in computer graphics and many NLE exports

- Legal range (16 to 235 in 8-bit terms): common in broadcast and some video pipelines

If you export in one range but the platform assumes the other, you will see:

- crushed blacks and clipped highlights (if full is treated as legal and expanded again)

- washed out contrast (if legal is treated as full and never expanded)

The best practice is not “always full” or “always legal.” The best practice is “be consistent end to end,” and verify what your export settings actually write.

If you are unsure and you are delivering to social platforms, many creators prefer full range for web-first delivery, but you must ensure your NLE tags it correctly and that you do not add an extra conversion on upload.
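
As a rough numeric illustration of a range mismatch, here is a minimal Python sketch using the standard 8-bit scaling between 0 to 255 and 16 to 235. The function names are illustrative. It shows that expanding a file that was already full range pushes everything below 16 to black and everything above 235 to white, while a legal range file shown without expansion never reaches true black or white.

```python
def full_to_legal(v: int) -> int:
    """Scale an 8-bit full-range value (0-255) into legal range (16-235)."""
    return round(16 + v * 219 / 255)


def legal_to_full(v: int) -> int:
    """Expand an 8-bit legal-range value to full range, clipping any overshoot."""
    return max(0, min(255, round((v - 16) * 255 / 219)))


# Correct round trip: black and white land where they should.
print(full_to_legal(0), full_to_legal(255))    # 16 235
print(legal_to_full(16), legal_to_full(235))   # 0 255

# Full-range file wrongly treated as legal: shadow detail at 10 and
# highlight detail at 245 are both clipped away.
print(legal_to_full(10), legal_to_full(245))   # 0 255

# Legal-range file wrongly treated as full: no conversion happens, so
# "black" is displayed at 16 and "white" at 235, which reads as washed out.
```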

Workflow: how to avoid surprises

Step 1: Set up a reliable monitoring environment

Your eyes will lie to you if:

- your display brightness changes automatically

- your phone has True Tone or Night Shift enabled

- you grade at night and export for a daytime mobile audience

Practical checklist:

- disable True Tone and Night Shift while evaluating exports

- set a consistent brightness when reviewing

- if possible, compare on at least two devices (one iPhone class display, one Android class display, or one phone plus one laptop)

Step 2: Manage footage correctly

Identify what you shot:

- standard Rec.709

- log (S-Log, V-Log, C-Log, etc.)

- HDR formats (HLG, PQ)

Then apply the correct input transform. If log is treated as Rec.709 or vice versa, you can “fix it by eye,” but your pipeline becomes fragile and harder to predict once the file is exported and transcoded.

Step 3: Choose the right timeline settings

For most social delivery:

- grade in a Rec.709 SDR timeline, with correct input transforms

- keep your highlights and saturation in a range that survives compression

Choose an HDR timeline only when you truly intend HDR delivery; otherwise you will fight tone mapping and metadata.

Step 4: Export settings that matter

Most export settings are not about “more quality”; they are about “less damage after transcoding.”

- Codec: H.264 is widely compatible, H.265 can preserve quality at lower bitrate but is more complex for some workflows

- Bitrate: too low a bitrate increases banding and macroblocking; extra bitrate beyond a clean master is often wasted because platforms re-encode anyway

- Bit depth: 10-bit can protect gradients, especially if your source and pipeline are 10-bit

Chroma subsampling matters, but not in the way many expect. The platform will usually convert. Your priority is to give it a clean file with stable gradients and avoid pushing extreme saturation in small areas.
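
If you export or re-wrap with FFmpeg rather than from an NLE, the settings above might translate into something like the following Python sketch, which only builds the argument list. The function name, the CRF value of 18, the AAC audio choice, and the legal ("tv") range tag are assumptions for illustration, not platform requirements. Note that the `-color_*` options tag the output; they do not convert pixels, so the source must already be Rec.709.

```python
def build_ffmpeg_args(src: str, dst: str, ten_bit: bool = False, crf: int = 18) -> list[str]:
    """Build an ffmpeg command for an H.264 file tagged as SDR Rec.709."""
    # 10-bit H.264 needs an x264 build with 10-bit support; 8-bit is the safe default.
    pix_fmt = "yuv420p10le" if ten_bit else "yuv420p"
    return [
        "ffmpeg", "-i", src,
        "-c:v", "libx264",
        "-crf", str(crf),              # quality target (assumed value)
        "-pix_fmt", pix_fmt,
        "-color_primaries", "bt709",   # gamut tag
        "-color_trc", "bt709",         # transfer function tag
        "-colorspace", "bt709",        # YCbCr matrix tag
        "-color_range", "tv",          # legal range tag (assumption)
        "-c:a", "aac",
        "-movflags", "+faststart",
        dst,
    ]


args = build_ffmpeg_args("master.mov", "social.mp4")
print(" ".join(args))
```

To run it you could pass the list to `subprocess.run(args, check=True)`. The filenames are placeholders.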

Mini chart: what usually breaks first on each platform

Step 5: Validate after upload

Never judge by local playback alone.

A reliable method:

- export a 10 to 20 second test clip with difficult material (skin tones, gradients, shadows, saturated reds)

- upload privately or unlisted

- watch on the actual target app and device

- compare against your NLE viewer with the same viewing conditions

Look for:

- black detail (does it crush?)

- highlight rolloff (does it clip?)

- skin tones (do they shift?)

- brand colors (do they drift?)

- gradients (do they band?)
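
If you want a number to go with the visual check for crushed blacks, one rough approach is to compare how many near-black samples a frame has before and after upload. The sketch below assumes you already have 8-bit luma samples from matching frames of the master and of a screen capture or download; how you extract them is not shown. The function names, the threshold of 20, and the tolerance of 0.05 are arbitrary illustrative choices.

```python
def near_black_fraction(luma: list[int], threshold: int = 20) -> float:
    """Fraction of 8-bit luma samples at or below the threshold."""
    if not luma:
        raise ValueError("no samples")
    return sum(1 for v in luma if v <= threshold) / len(luma)


def shadows_crushed(master: list[int], uploaded: list[int], tolerance: float = 0.05) -> bool:
    """True if the uploaded frame has noticeably more near-black samples than the master."""
    return near_black_fraction(uploaded) - near_black_fraction(master) > tolerance


# Hypothetical samples from the same frame before and after upload.
master = [18, 22, 25, 30, 40, 60, 90, 120]
uploaded = [10, 14, 17, 22, 33, 55, 88, 120]

print(near_black_fraction(master), near_black_fraction(uploaded))  # 0.125 0.375
print(shadows_crushed(master, uploaded))  # True
```

This does not replace looking at the clip in the target app; it only helps you confirm that a shift you think you see is really there.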

Common problems and fixes

Problem: video looks washed out

Typical causes:

- wrong gamma tagging

- double transforms (you converted to Rec.709, then the app converts again)

- viewing in an unmanaged player while grading in a managed viewer

Fix checklist:

- confirm timeline and export color space match your intent

- avoid stacking multiple “Rec.709” conversions (LUT plus output transform)

- export a test file and check in multiple players

Problem: video looks too dark

Typical causes:

- mastering in a dim environment, then viewing on bright phones

- platform conversion or tone mapping

- mismatch between your intended gamma and the device’s interpretation

Fix checklist:

- compare in the target app on a phone

- slightly lift midtones and shadows for social delivery if needed

- avoid placing important detail in near-black values
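
To see why a gamma mismatch reads as "too dark," here is a simplified Python sketch that models a display as a pure power law. Real displays and apps are more complicated, and the gamma values 2.2 and 2.4 are common reference points used here as assumptions. The same code value produces less light under the higher gamma, and the loss is proportionally larger in the shadows.

```python
def displayed_light(code_value: float, display_gamma: float) -> float:
    """Relative light output (0-1) for a normalized code value on a power-law display."""
    return code_value ** display_gamma


for cv in (0.1, 0.25, 0.5):
    g22 = displayed_light(cv, 2.2)
    g24 = displayed_light(cv, 2.4)
    # A ratio below 1 means the gamma 2.4 display shows this value darker.
    print(f"code {cv:.2f}: gamma 2.2 -> {g22:.4f}, gamma 2.4 -> {g24:.4f}, ratio {g24 / g22:.2f}")
```

This is one reason a small midtone and shadow lift can help when a grade made against one assumed gamma is viewed under another.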

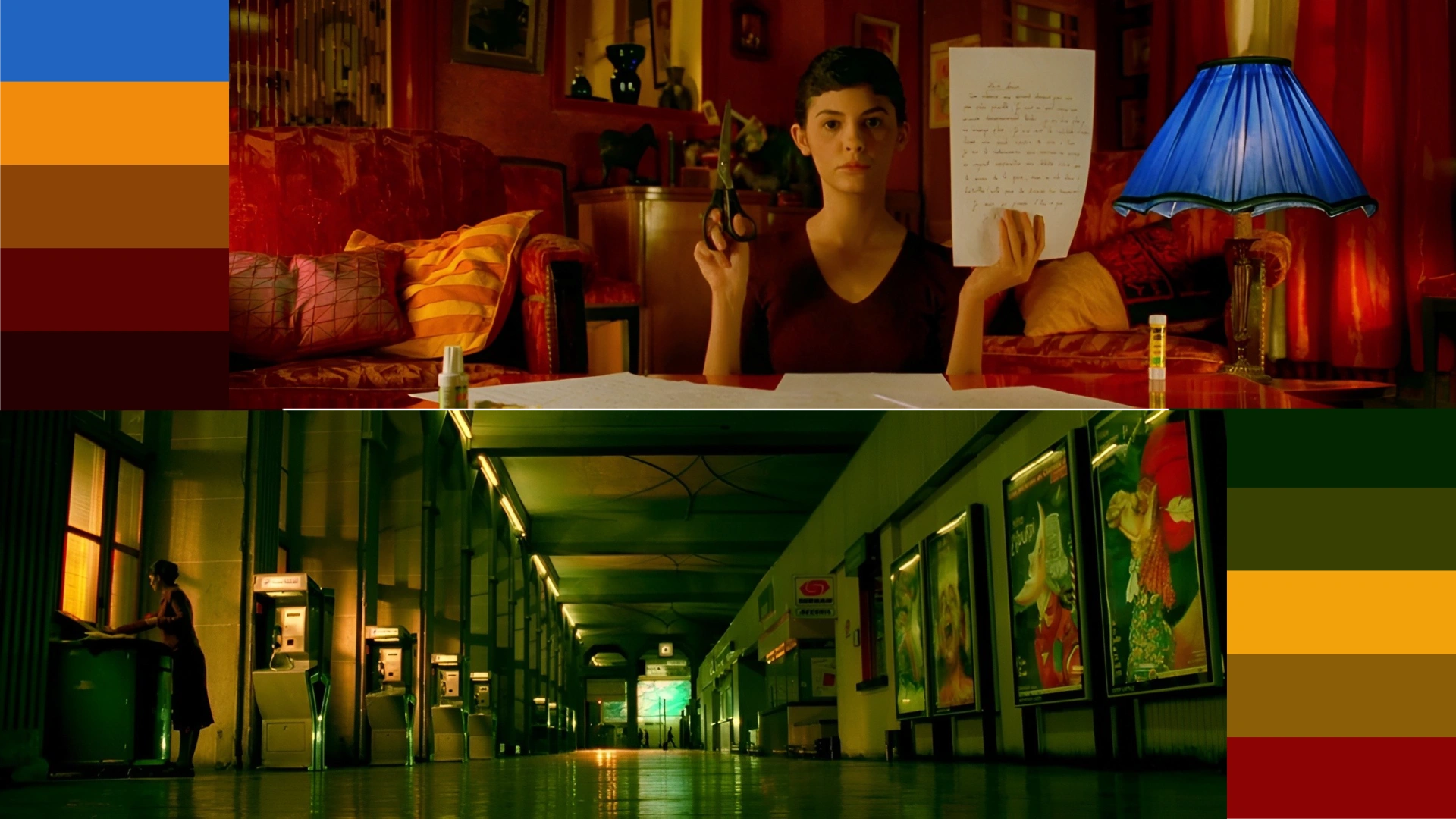

Problem: colors shift (especially reds and skin)

Typical causes:

- wide gamut sources not converted properly

- exporting in P3 or Rec.2020 without a controlled pipeline

- heavy compression exaggerating saturation

Fix checklist:

- convert wide gamut sources into Rec.709 for SDR deliverables

- watch reds, oranges, and magentas closely; they are the first to break

- reduce saturation in the most saturated regions before export

Problem: banding in gradients

Typical causes:

- low bitrate

- 8-bit export of a scene dominated by smooth gradients

- aggressive compression plus heavy effects

Fix checklist:

- use adequate bitrate for the master

- consider 10-bit export when possible

- add subtle noise or dither in the grade to break up perfect gradients
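
The effect of noise on banding can be illustrated with a one-dimensional toy example in Python. This is a sketch under simplifying assumptions: it quantizes a subtle gradient to 8-bit with and without a small amount of uniform noise, and uses the longest run of identical values as a stand-in for band width. It does not model video compression, and the function names and noise amount are illustrative.

```python
import random


def quantize_8bit(values: list[float], dither: bool, seed: int = 0) -> list[int]:
    """Quantize normalized values (0-1) to 8-bit codes, optionally adding +/-0.5 LSB noise first."""
    rng = random.Random(seed)  # seeded so the example is repeatable
    out = []
    for v in values:
        scaled = v * 255
        if dither:
            scaled += rng.uniform(-0.5, 0.5)
        out.append(max(0, min(255, int(scaled + 0.5))))
    return out


def longest_run(codes: list[int]) -> int:
    """Length of the longest stretch of identical neighbouring codes (a proxy for band width)."""
    best = run = 1
    for prev, cur in zip(codes, codes[1:]):
        run = run + 1 if cur == prev else 1
        best = max(best, run)
    return best


# A subtle 1920-pixel gradient spanning only about 13 code values.
gradient = [0.20 + 0.05 * i / 1919 for i in range(1920)]
plain = quantize_8bit(gradient, dither=False)
dithered = quantize_8bit(gradient, dither=True)

print("widest band without noise:", longest_run(plain))
print("widest band with noise:", longest_run(dithered))
```

Without noise, each code value covers a wide flat strip with a hard edge. With noise, neighbouring codes interleave, so the edge is spread out and harder to see.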

Practical recommendations by creator type

Fast social workflow (speed first)

- Grade in an SDR Rec.709 timeline

- Export SDR Rec.709 consistently

- Avoid extreme blacks and extreme saturation

- Upload a short test clip when you change cameras, plugins, or export presets

This is the workflow that keeps you shipping without chasing color ghosts.

Quality focused workflow (consistency first)

- Use a color managed pipeline end to end

- Treat your monitoring environment as part of the deliverable

- Maintain two export presets:

  - one for YouTube (long form, cleaner compression)

  - one for Instagram and TikTok (more robust to aggressive compression)

Conclusion

Once you find a combination that holds up on your devices in the real apps, save it as a preset and stop re-solving the same problem on every project.
